AI pipelines & monitoring

SHAPE’s AI pipelines & monitoring service builds production-grade AI systems by tracking model performance, uptime, and drift across the full lifecycle from data ingestion to deployment. This page explains core pipeline concepts, monitoring signals, common use cases, and a step-by-step implementation playbook.


AI pipelines & monitoring is how SHAPE helps teams operationalize AI reliably by tracking model performance, uptime, and drift across the full lifecycle: data → training → evaluation → deployment → production observation. We design production-grade pipelines and monitoring loops so models stay accurate, available, and explainable—even as data changes and traffic scales.


Production AI is a loop: build → deploy → observe → improve. Monitoring closes the loop by tracking model performance, uptime, and drift.

AI pipelines & monitoring overview

What SHAPE delivers: AI pipelines & monitoring

SHAPE delivers AI pipelines & monitoring as a production engineering engagement with one outcome: tracking model performance, uptime, and drift so AI systems remain trustworthy after launch. We don’t stop at “a model that runs”—we build the operating system around it: data reliability, orchestration, release gates, observability, and runbooks.

Typical deliverables

you don’t yet have production AI pipelines & monitoring.

Related services (internal links)

AI pipelines & monitoring work best when data, deployment, and integration surfaces are aligned. Teams commonly pair tracking model performance, uptime, and drift with:

What are AI pipelines (and why monitoring is inseparable)

AI pipelines are the automated workflows that move data from source systems into usable training and inference inputs, then produce, evaluate, and ship models into production. In real organizations, “the pipeline” isn’t one script—it’s a chain of steps with dependencies, failure modes, and governance needs.
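To make "a chain of steps with dependencies" concrete, here is a minimal sketch that models a pipeline as a dependency graph using Python's standard-library graphlib (Python 3.9+). The step names and the `downstream_of` helper are hypothetical, chosen for illustration only; a real pipeline would normally be defined in an orchestrator.

```python
from graphlib import TopologicalSorter

# Hypothetical step names; each key maps to the steps it depends on.
PIPELINE = {
    "ingest": set(),
    "transform": {"ingest"},
    "train": {"transform"},
    "evaluate": {"train"},
    "deploy": {"evaluate"},
    "monitor": {"deploy"},
}


def execution_order(steps: dict) -> list:
    """Return an order in which every step runs after its dependencies."""
    return list(TopologicalSorter(steps).static_order())


def downstream_of(steps: dict, failed: str) -> set:
    """Steps blocked, directly or transitively, when `failed` does not complete."""
    blocked = set()
    changed = True
    while changed:
        changed = False
        for step, deps in steps.items():
            if step not in blocked and (failed in deps or deps & blocked):
                blocked.add(step)
                changed = True
    return blocked
```

Calling `downstream_of(PIPELINE, "train")` shows which later steps are affected by a training failure, which is the kind of question a dependency-aware pipeline should be able to answer.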

AI pipelines & monitoring matters because every step can degrade over time: upstream data changes, distributions drift, schemas evolve, and dependencies fail. That’s why we treat monitoring as part of the pipeline, not an add-on. The objective is continuous tracking of model performance, uptime, and drift.

What’s included in a production AI pipeline

How AI pipelines work end-to-end (from data to decisions)

SHAPE designs AI pipelines & monitoring as an end-to-end system. Below is the practical flow that supports tracking model performance, uptime, and drift in production.

1) Source data → ingestion

We start by identifying source-of-truth systems (product events, CRM, support tools, databases, third-party feeds). Ingestion is designed for reality:

2) Transformations → feature-ready datasets

Transformations convert raw data into model-ready inputs. We encode business meaning in a repeatable way so training and inference use consistent definitions. This reduces training/serving skew, one of the most common reasons monitoring reports an accuracy drop after launch.
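One common way to keep definitions consistent is to route both the training path and the serving path through a single feature function. The sketch below assumes this approach; the feature names, field names, and function names are hypothetical and not taken from any specific SHAPE project.

```python
import math
from datetime import date


def build_features(raw: dict, as_of: date) -> dict:
    """Single source of truth for feature definitions (hypothetical features)."""
    signup = date.fromisoformat(raw["signup_date"])
    spend = max(raw.get("monthly_spend", 0.0), 0.0)
    return {
        "account_age_days": (as_of - signup).days,
        "log_monthly_spend": math.log1p(spend),
        "is_paid_plan": 1 if raw.get("plan", "free") != "free" else 0,
    }


def training_rows(records: list, as_of: date) -> list:
    """Batch path: build the training set with the shared function."""
    return [build_features(record, as_of) for record in records]


def serving_features(request_payload: dict, as_of: date) -> dict:
    """Online path: the same function applied to a single request."""
    return build_features(request_payload, as_of)
```

Because both paths call `build_features`, a change to a feature definition reaches training and inference together instead of diverging silently.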

3) Training + evaluation (build a measurable baseline)

We run training in a reproducible way and compare against baselines:

4) Deployment into serving (online, batch, or streaming)

AI pipelines should support the serving mode your product needs:

5) Monitoring + feedback loop (the part most teams miss)

This is where production reliability is won. We instrument the system so you can continuously track model performance, uptime, and drift and connect signals to real outcomes.

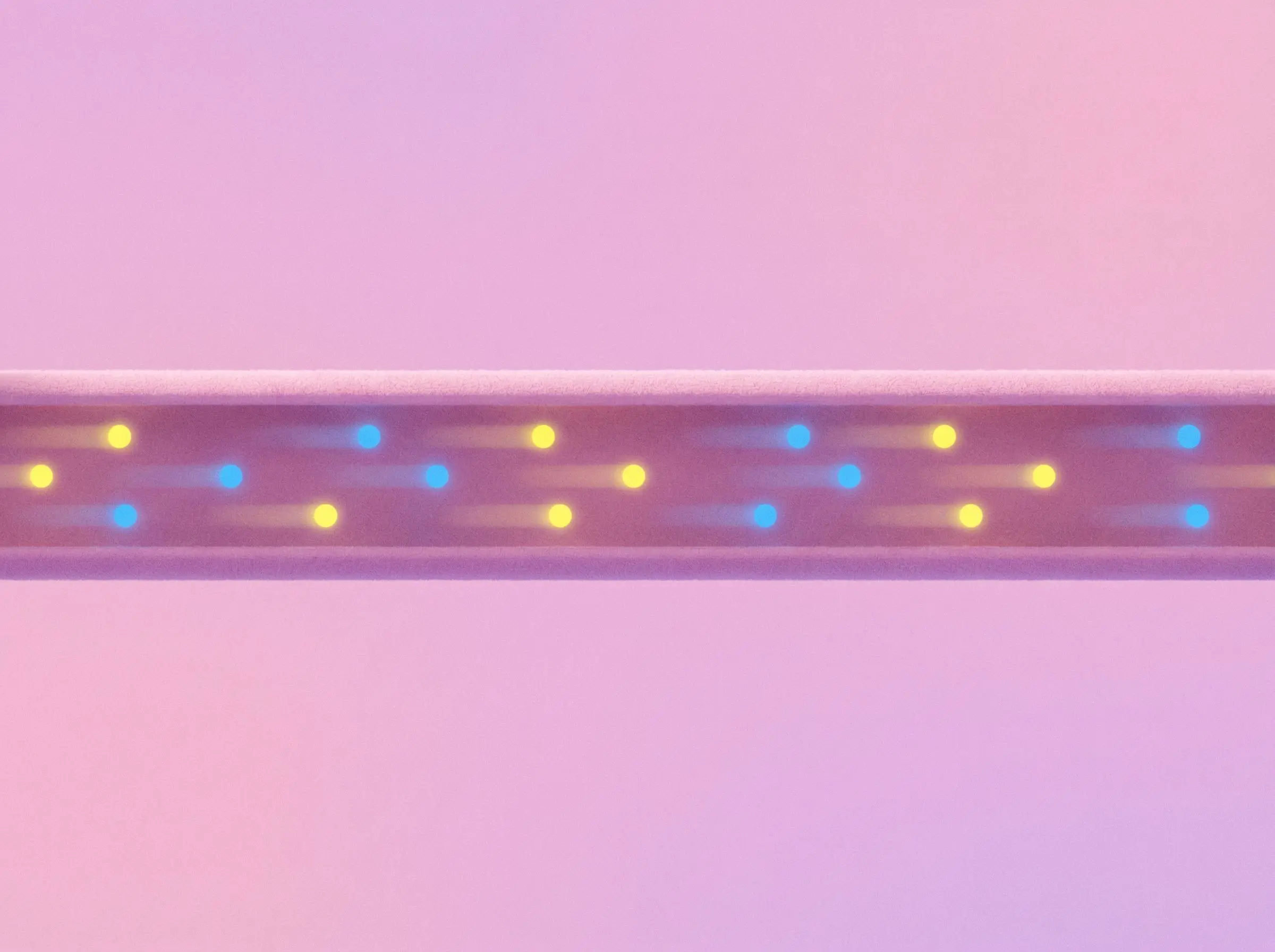

End-to-end AI pipeline lifecycle: data → training → deployment → monitoring → iteration.

Tracking model performance, uptime, and drift: what to monitor

Monitoring is where AI succeeds or quietly fails. SHAPE builds AI pipelines & monitoring to make issues visible early—before customers notice. The core promise is tracking model performance, uptime, and drift in a way that’s actionable.

System health (uptime and reliability)

Data quality monitoring (the fastest leading indicator)

Drift monitoring (data drift + prediction drift)

Model performance monitoring (when ground truth exists)

For many products, “true” outcomes lag. We design a practical approach:

(drift/outcomes). Tracking only one gives false confidence.

Reference architecture patterns for AI pipelines & monitoring

There’s no single architecture for every team. SHAPE chooses the simplest pattern that supports reliability and continuous tracking of model performance, uptime, and drift.

Pattern 1: Batch-first pipeline + scheduled evaluation

Best for: forecasting, churn/lead scoring, offline enrichment. Batch-first is often the fastest path to production value with strong monitoring and low operational complexity.

Pattern 2: Online inference service + observability loop

Best for: real-time decisions and interactive product features.

Pattern 3: Streaming pipeline + event-driven inference

Best for: near-real-time detection and systems reacting to events.

Pattern 4: Hybrid (batch training + online serving + streaming signals)

Best for: most production systems. Combine stable batch training with online serving and streaming monitoring signals to improve responsiveness and operational visibility.

If your pipeline requires robust deployment discipline, pair this with DevOps, CI/CD pipelines and Model deployment & versioning.

Use case explanations

Below are common scenarios where teams engage SHAPE for AI pipelines & monitoring—specifically to improve tracking model performance, uptime, and drift.

1) Your model works in notebooks, but production behavior is unpredictable

This usually comes from missing data contracts, unversioned transforms, or training/serving skew. We stabilize the pipeline, define contracts, and add monitoring so behavior is repeatable and deviations are visible.
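As an illustration of what a data contract can look like in its simplest form, the sketch below checks each record against a declared set of columns, types, and nullability. The contract contents and the `contract_violations` helper are assumptions made for this example; production systems often use a dedicated schema or validation library instead.

```python
# Hypothetical contract: column name -> (expected type, nullable)
CONTRACT = {
    "user_id": (str, False),
    "event_ts": (str, False),
    "amount": (float, True),
}


def contract_violations(row: dict, contract: dict = CONTRACT) -> list:
    """Return human-readable violations for one record (empty list means valid)."""
    problems = []
    for column, (expected_type, nullable) in contract.items():
        if column not in row:
            problems.append(f"missing column: {column}")
            continue
        value = row[column]
        if value is None:
            if not nullable:
                problems.append(f"null not allowed: {column}")
        elif not isinstance(value, expected_type):
            problems.append(f"wrong type for {column}: {type(value).__name__}")
    # Columns the contract does not know about are reported, not silently accepted.
    for column in sorted(row.keys() - contract.keys()):
        problems.append(f"unexpected column: {column}")
    return problems
```

Counting violations per batch gives a simple data-quality signal that can be alerted on before a model ever sees the data.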

2) You’re shipping model updates, but regressions slip into production

We implement evaluation gates, shadow/canary releases, and version comparisons so updates are safe. Monitoring makes regressions obvious—and rollback becomes boring.
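An evaluation gate can be as simple as comparing a candidate's metrics with the current baseline and blocking promotion when any metric regresses beyond a tolerance. The following is a minimal sketch under that assumption; the metric names, the 0.01 tolerance, and the `evaluation_gate` function are illustrative, not a prescribed policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateResult:
    passed: bool
    reasons: tuple


def evaluation_gate(baseline: dict, candidate: dict,
                    max_regression: float = 0.01,
                    higher_is_better: tuple = ("auc", "precision")) -> GateResult:
    """Block promotion if any tracked metric regresses beyond the tolerance."""
    reasons = []
    for metric in higher_is_better:
        if metric not in candidate:
            reasons.append(f"{metric}: missing from candidate")
            continue
        drop = baseline[metric] - candidate[metric]
        if drop > max_regression:
            reasons.append(f"{metric}: dropped by {drop:.3f}")
    return GateResult(passed=not reasons, reasons=tuple(reasons))
```

In a release pipeline, a failed gate would stop the rollout and surface `reasons` to the reviewer; shadow and canary releases then cover what offline metrics cannot.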

3) Uptime and latency are blocking adoption of an AI feature

Even a strong model fails if inference is slow or unstable. We set SLOs, instrument traces, implement fallbacks, and build dashboards that tie reliability to user impact—core to tracking model performance, uptime, and drift.
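The sketch below shows one way to turn request logs into an SLO check and to wrap inference in a fallback. The targets (99.9% availability, 300 ms p95), the nearest-rank percentile method, and the function names are assumptions for illustration; real targets depend on the product.

```python
def p95(latencies_ms: list) -> float:
    """Nearest-rank 95th percentile, using integer arithmetic for the rank."""
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n)
    return ordered[rank - 1]


def slo_report(requests: list, availability_target: float = 0.999,
               p95_target_ms: float = 300.0) -> dict:
    """requests: list of (latency_ms, succeeded) tuples for one time window."""
    if not requests:
        raise ValueError("no requests in window")
    ok = sum(1 for _, succeeded in requests if succeeded)
    availability = ok / len(requests)
    latency_p95 = p95([latency for latency, _ in requests])
    return {
        "availability": availability,
        "p95_ms": latency_p95,
        "availability_ok": availability >= availability_target,
        "latency_ok": latency_p95 <= p95_target_ms,
    }


def predict_with_fallback(predict, features, default):
    """Return the model's prediction, or a safe default if the call fails."""
    try:
        return predict(features)
    except Exception:
        return default
```

The fallback keeps the feature usable when inference fails, while the report makes the failure visible instead of hiding it.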

4) Drift is causing silent accuracy decay over time

We implement drift monitoring (feature + prediction drift), add alert thresholds, and create a retraining/recalibration playbook. The goal is early detection and controlled response—not surprises.
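One widely used drift score for a single numeric feature (or for prediction scores) is the Population Stability Index (PSI). The sketch below is an assumption about how such a check could be implemented, not a description of SHAPE's tooling; the equal-width binning, the `eps` floor, and the 0.1 / 0.25 thresholds are common rules of thumb that should be tuned per feature.

```python
import math


def psi(expected: list, actual: list, bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a reference sample and a current sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant features

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clip out-of-range values
        # Floor each proportion at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(score: float, warn: float = 0.1, alert: float = 0.25) -> str:
    """Map a PSI score to an alert level (thresholds are rule-of-thumb defaults)."""
    if score >= alert:
        return "alert"
    if score >= warn:
        return "warn"
    return "ok"
```

Running this per feature on a schedule, with the training data as the reference sample, gives an early signal that can trigger the retraining or recalibration playbook.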

5) You need auditability and traceability for high-impact decisions

When decisions affect money, eligibility, or trust, you need traceability. We implement lineage and logging so you can answer: which model/version produced this result, with which inputs, under which conditions.
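A minimal sketch of such a trace is an audit record written for every prediction. The field names and the `audit_record` function below are hypothetical; the example stores a hash of the inputs rather than the inputs themselves, which assumes the raw inputs are retained elsewhere under the appropriate privacy controls.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(model_name: str, model_version: str, features: dict,
                 prediction, now: datetime = None) -> dict:
    """Build a traceable record for one prediction (field names are illustrative)."""
    # Canonical JSON so the same inputs always hash to the same value.
    canonical = json.dumps(features, sort_keys=True, separators=(",", ":"))
    return {
        "model": model_name,
        "version": model_version,
        "input_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "prediction": prediction,
        "logged_at": (now or datetime.now(timezone.utc)).isoformat(),
    }
```

With records like this, a disputed decision can be matched to the exact model version and to the inputs that produce the same hash.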


Step-by-step tutorial: implement AI pipelines & monitoring

This playbook reflects how SHAPE builds AI pipelines & monitoring systems that hold up in production—centered on tracking model performance, uptime, and drift.

This is the core of tracking model performance, uptime, and drift reliably.

Treat every deployment like an experiment: define success metrics, compare versions, and document outcomes. That discipline turns tracking model performance, uptime, and drift into a reliable operating system.

Dashboards should reflect (1) system health, (2) data health, and (3) model behavior.

Who are we?

SHAPE helps companies build in-house AI workflows that optimise their business. If you’re looking for efficiency, we believe we can help.

Customer testimonials

Our clients love the speed and efficiency we provide.

FAQs

Find answers to your most pressing questions about our services and data ownership.

All generated data is yours. We prioritize your ownership and privacy. You can access and manage it anytime.

Absolutely! Our solutions are designed to integrate seamlessly with your existing software. Regardless of your current setup, we can find a compatible solution.

We provide comprehensive support to ensure a smooth experience. Our team is available for assistance and troubleshooting. We also offer resources to help you maximize our tools.

Yes, customization is a key feature of our platform. You can tailor the nature of your agent to fit your brand's voice and target audience. This flexibility enhances engagement and effectiveness.

We adapt pricing to each company and their needs. Since our solutions consist of smart custom integrations, the end cost heavily depends on the integration tactics.